Hi Thomas,

OK, let me explain why I believe that all of your use cases would be better off without an analytical inverse:

In General:

An analytical inverse only produces sensible scene referred data if the display referred data itself was generated by the forward transformation. Let me explain:

Let’s assume we have a forward transform that maps:

5.0 → 0.95

10.0 → 0.96

20.0 → 0.97

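So a display referred image (generated by a different forward transform) that has some values in that range would, through the analytical inverse, produce wildly varying scene referred data: a change of just 0.01 in the display value moves the scene value by 5.0 or even 10.0. That is highly unstable for further processing.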

What do we lose if we instead have an inverse that maps:

0.95 → 4.0

0.96 → 5.0

0.97 → 6.0

We will see that the errors this inverse produces (when viewed through the forward transform again) are very small, but we gain much more robustness.

What I am trying to say is that an analytical inverse can produce highly unstable image data, whereas a slightly colour-inaccurate inverse transform can produce very robust data.
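To put rough numbers on the example above, here is a minimal sketch. It uses only the sample points listed earlier; the linear interpolation between them and the helper names (`interp`, `scene_shift`) are assumptions made for illustration, not part of any real transform.

```python
# Sample points copied from the example above. Linear interpolation between
# them is an assumption made only for this sketch.
FORWARD = [(5.0, 0.95), (10.0, 0.96), (20.0, 0.97)]      # scene -> display
ANALYTICAL_INV = [(d, s) for s, d in FORWARD]            # exact inverse
ROBUST_INV = [(0.95, 4.0), (0.96, 5.0), (0.97, 6.0)]     # display -> scene


def interp(table, x):
    """Piecewise-linear lookup; x must lie inside the table's range."""
    for (x0, y0), (x1, y1) in zip(table, table[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)
    raise ValueError(f"{x} is outside the table")


def scene_shift(inverse, display, delta=0.005):
    """How far the scene value moves when the display value moves by delta."""
    return interp(inverse, display + delta) - interp(inverse, display)


analytical_shift = scene_shift(ANALYTICAL_INV, 0.96)   # about 5.0
robust_shift = scene_shift(ROBUST_INV, 0.96)           # about 0.5

# Round trip: display -> robust inverse -> forward transform again.
round_trip_error = abs(interp(FORWARD, interp(ROBUST_INV, 0.97)) - 0.97)  # about 0.018
```

With these assumed numbers, the same small nudge in display value moves the analytically inverted scene value about ten times further than the robust one, while the robust round trip is off by roughly 0.018 on a 0 to 1 display scale.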

So to your use cases:

Would it not be better to engage with the logo designer and go through the process of creating a real scene referred logo that transforms correctly in all viewing conditions? Or generate a scene referred logo that is stable and then tweak the appearance slightly in post?

Proposing an inverse transformation as a “no-brainer solution” will lead to “bad practice”: people will assume that it solves the problem, but it won’t really, as the logo could be in a highly unstable state and break when an HDR version is made, for example. So you have a ticking time bomb in your timeline waiting to explode.

This leads to AR, I guess. What if you shine some light on the game scene or want to apply some post FX to the image? The smartphone feed could produce horrible artefacts if touched. Wouldn’t it be better to have a scene referred image that “almost” looks like the original but can be graded, relit, etc.?

This is really “bad practice” in my eyes. You will undermine the biggest goal of ACES which is the long-term archive. Encouraging people to enter ACES via the Rec709 backdoor is really dangerous in my eyes.

Wouldn’t it be better to rework those Looks to make them really “Scene Referred” and use them as proper LMTs with no dynamic range reduction?

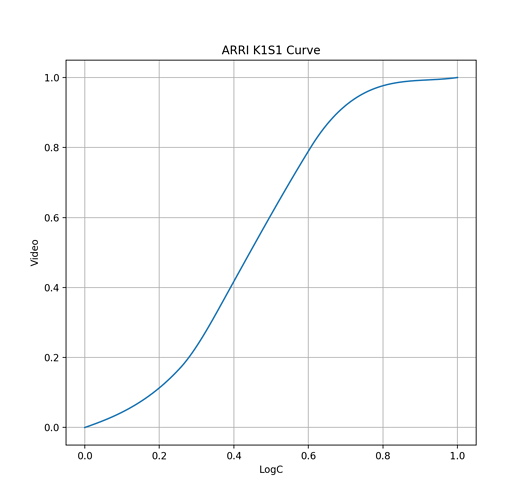

We have made many inverse DRTs over the years, and we have also inverted most ODT+RRTs (0.1.1 and 1.0) nicely, I guess. We also have two models that are parametrised and have an analytical inverse. Guess what: we drive the inverse with slightly different parameters to gain robustness.

Don’t get me wrong: the robust inverse will produce a scene referred image that, when viewed forward, will look almost identical to the result of an analytical inverse. Differences are only visible if you measure the images…
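As a rough illustration of driving an inverse with slightly different parameters, here is a minimal sketch. The tone curve, its `peak` parameter and the value 1.02 are all hypothetical, chosen only to show the idea; this is not one of the models mentioned above.

```python
def forward(scene, peak=1.0):
    """Hypothetical tone curve: scene >= 0 maps into [0, peak)."""
    return peak * scene / (scene + 1.0)


def inverse(display, peak=1.0):
    """Exact inverse of forward() for the same peak; needs display < peak."""
    return display / (peak - display)


display = 0.99
exact_scene = inverse(display)                # about 99
robust_scene = inverse(display, peak=1.02)    # about 33

# A +0.005 nudge in display value moves each result by:
exact_jump = inverse(display + 0.005) - exact_scene               # about 100
robust_jump = inverse(display + 0.005, peak=1.02) - robust_scene  # about 6.8

# Viewed forward again, the robust result lands near the original value.
robust_round_trip = forward(robust_scene)     # about 0.971
```

In this toy case the slightly larger `peak` keeps the denominator away from zero, so values near the top of the display range no longer explode. How close the round trip stays to the original is a matter of how the parameter is tuned.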

I hope some of this makes sense.

best

Daniele